After a while this can start to incur additional load on the database.

However, at scale the metadata starts to accumulate pretty fast. In a normal-sized Airflow deployment, performance degradation due to metadata volume wouldn’t be an issue, at least within the first years of continuous operation. Increasing Volumes Of Metadata Can Degrade Airflow Operations This allows you to optimize your environments for interactive DAG development or scheduler performance depending on the requirements. Airflow is highly configurable and offers several ways to tune the background file processing (such as the sort mode, the parallelism, and the timeout). For example, we allow users to upload DAGs directly to the staging environment but limit production environment uploads to our continuous deployment processes.Īnother factor to consider when ensuring fast file access when running Airflow at scale is your file processing performance. Additionally, we can use Google Cloud Platform’s IAM (identify and access management) capabilities to control which users are able to upload files to a given environment. This also allows us to conditionally sync only a subset of the DAGs from a given bucket, or even sync DAGs from multiple buckets into a single file system based on the environment’s configuration (more on this later).Īltogether this provides us with fast file access as a stable, external source of truth, while maintaining our ability to quickly add or modify DAG files within Airflow. This script runs in a separate pod within the same cluster. We wrote a custom script which synchronizes the state of this volume with GCS, so that users only have to interact with GCS for uploading or managing DAGs. We then mounted this NFS server as a read-write-many volume into the worker and scheduler pods. 
The volume of reads was especially high because every pod in the environment had to mount the bucket separately.Īfter some experimentation we found that we could vastly improve performance across our Airflow environments by running an NFS (network file system) server within the Kubernetes cluster. However, at scale this proved to be a bottleneck on performance as every file read incurred a request to GCS. Our initial deployment of Airflow utilized GCSFuse to maintain a consistent set of files across all workers and schedulers in a single Airflow environment. This means the contents of the DAG directory must be consistent across all schedulers and workers in a single environment ( Airflow suggests a few ways of achieving this).Īt Shopify, we use Google Cloud Storage (GCS) for the storage of DAGs. These files must be scanned often in order to maintain consistency between the on-disk source of truth for each workload and its in-database representation. A well defined strategy for file access ensures that the scheduler can process DAG files quickly and keep your jobs up-to-date.Īirflow keeps its internal representation of its workflows up-to-date by repeatedly scanning and reparsing all the files in the configured DAG directory. File Access Can Be Slow When Using Cloud Storageįast file access is critical to the performance and integrity of an Airflow environment. As a result of this rapid growth, we have encountered a few challenges, including slow file access, insufficient control over DAG (directed acyclic graph) capabilities, irregular levels of traffic, and resource contention between workloads, to name a few.īelow we’ll share some of the lessons we learned and solutions we built in order to run Airflow at scale. As adoption increases within Shopify, the load incurred on our Airflow deployments will only increase. This environment averages over 400 tasks running at a given moment and over 150,000 runs executed per day.

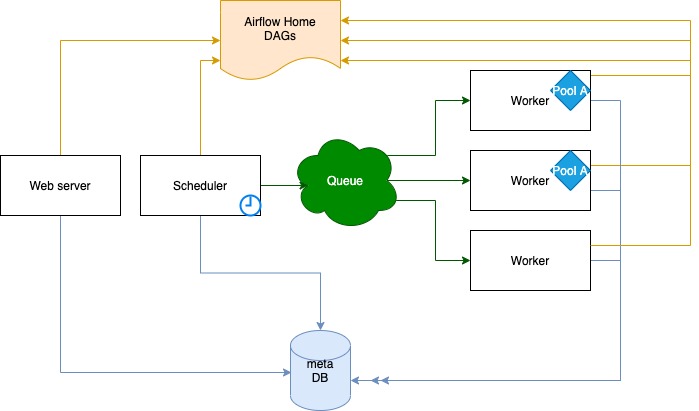

In our largest environment, we run over 10,000 DAGs representing a large variety of workloads. Shopify’s usage of Airflow has scaled dramatically over the past two years. At the time of writing, we are currently running Airflow 2.2 on Kubernetes, using the Celery executor and MySQL 8. At Shopify, we’ve been running Airflow in production for over two years for a variety of workflows, including data extractions, machine learning model training, Apache Iceberg table maintenance, and DBT-powered data modeling. Apache Airflow is an orchestration platform that enables development, scheduling and monitoring of workflows.
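To make the GCS-to-NFS synchronization described earlier more concrete, here is a minimal sketch of the comparison step such a script might perform. It is not Shopify’s script: the names `SyncPlan`, `plan_sync` and `prefixes` are hypothetical, and the two listings are plain dictionaries of path to checksum rather than real GCS or file system calls. The `prefixes` argument stands in for the idea of syncing only a subset of the DAGs in a bucket.

```python
from dataclasses import dataclass, field


@dataclass
class SyncPlan:
    """Actions needed to make the local volume match the bucket listing."""
    to_copy: list = field(default_factory=list)    # new or changed in the bucket
    to_delete: list = field(default_factory=list)  # present locally, not wanted


def plan_sync(remote, local, prefixes=("",)):
    """Compare two {relative_path: checksum} maps and return a SyncPlan.

    remote:   listing of the bucket, treated as the source of truth
    local:    listing of the mounted volume
    prefixes: only remote paths starting with one of these are synced
              (hypothetical way to select a subset of DAGs)
    """
    wanted = {p: c for p, c in remote.items() if p.startswith(tuple(prefixes))}
    plan = SyncPlan()
    for path, checksum in sorted(wanted.items()):
        if local.get(path) != checksum:
            plan.to_copy.append(path)
    for path in sorted(local):
        if path not in wanted:
            plan.to_delete.append(path)
    return plan


if __name__ == "__main__":
    remote = {"team_a/etl.py": "abc", "team_a/ml.py": "def", "team_b/report.py": "123"}
    local = {"team_a/etl.py": "abc", "team_a/ml.py": "old", "team_a/stale.py": "zzz"}
    plan = plan_sync(remote, local, prefixes=("team_a/",))
    print(plan.to_copy)    # ['team_a/ml.py']
    print(plan.to_delete)  # ['team_a/stale.py']
```

Keeping the planning step free of I/O makes it easy to test; a real script would wrap it with bucket listing, downloads and deletes, and would run it in a loop from its own pod.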
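The file processing knobs mentioned earlier (sort mode, parallelism, timeout) correspond to Airflow 2.x configuration options that can be set through `AIRFLOW__SECTION__KEY` environment variables. The sketch below shows one way to express such a profile. The post does not say which values Shopify uses, so the numbers here are illustrative assumptions, and `airflow_env` and `INTERACTIVE_DEV` are hypothetical names.

```python
def airflow_env(settings):
    """Turn {(section, key): value} into AIRFLOW__SECTION__KEY variables."""
    return {
        f"AIRFLOW__{section.upper()}__{key.upper()}": str(value)
        for (section, key), value in settings.items()
    }


# Illustrative values only, not recommendations from the original post.
INTERACTIVE_DEV = {
    # Parse recently modified files first so edits show up sooner.
    ("scheduler", "file_parsing_sort_mode"): "modified_time",
    # Number of DAG files parsed in parallel.
    ("scheduler", "parsing_processes"): 4,
    # Seconds before a single file's parsing process is timed out.
    ("core", "dag_file_processor_timeout"): 120,
}

if __name__ == "__main__":
    for name, value in sorted(airflow_env(INTERACTIVE_DEV).items()):
        print(f"{name}={value}")
```

An environment tuned for scheduler throughput rather than interactive development would use the same option names with different values, which is why a small per-environment profile like this can be convenient.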